Without mastery of tokens, Oracle is destined for massive layoffs

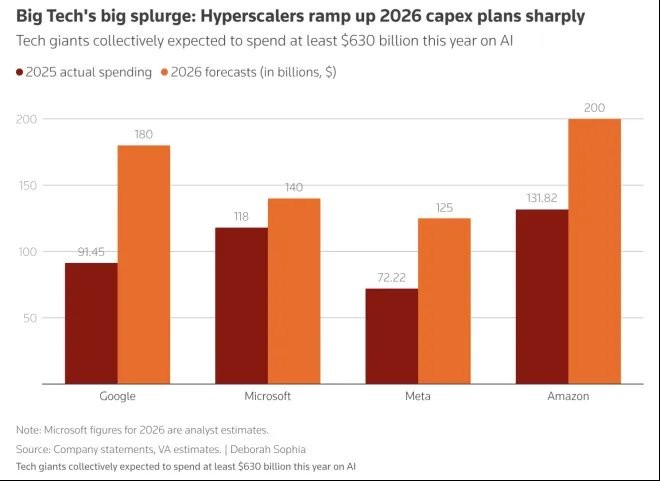

Oracle has announced a new round of layoffs affecting thousands of employees while planning to raise its annual capital expenditure to approximately $50 billion, mainly for AI infrastructure construction. This investment is expected to push the company's free cash flow from $11.8 billion in 2024 into negative territory, reaching -$23 billion by 2026. Pairing layoffs with cost control has become a trend in the AI era, with similar situations occurring at other AI infrastructure companies.

Oracle abruptly announced layoffs in the early morning, and it was not an April Fool's joke.

According to CNBC, Oracle has initiated a new round of layoffs affecting thousands of employees.

At the same time, it is investing hundreds of billions of dollars in building AI infrastructure.

Multiple industry media outlets have reported that Oracle plans to increase its annual capital expenditure to about $50 billion, primarily for data center and AI infrastructure construction.

This investment has already begun to erode the company's cash flow: TheStreet data shows that Oracle's free cash flow is expected to swing from about $11.8 billion in 2024 into negative territory, reaching -$23 billion by 2026. Additionally, Oracle's stock price has fallen about 25% this year, a steeper decline than that of any other tech giant.

On one side is the continuous expansion of AI investment, and on the other side are layoffs and cost control. This combination is not common among traditional software companies but is becoming a typical state for infrastructure companies in the AI era.

If you are in a company that deals with infrastructure, you should be cautious: the hotter AI gets, the more likely you are to be "optimized."

Oracle is just the latest example.

Oracle's layoffs are not an isolated case

Similar events are happening across the entire AI infrastructure chain.

Between 2025 and 2026, several companies within this chain have announced large-scale layoffs:

Intel announced layoffs of about 25,000 people in 2025 as part of its manufacturing and cost structure adjustments;

Amazon laid off about 16,000 people in early 2026;

Microsoft laid off about 9,000 people in mid-2025;

Block laid off over 4,000 people in early 2026.

These companies span different sub-sectors, including semiconductors, cloud computing, enterprise software, and payment infrastructure. While their layoffs each have specific reasons, there is a clear commonality: they are all supporting AI.

These companies are not peripheral participants in the AI wave; rather, they are among the first to take on the growth in AI demand. For example, cloud vendors handle model inference loads, chip manufacturers provide computational support, and enterprise software companies manage data and processes. As AI demand has grown, they have generally received more orders and seen higher usage. In other words, they have "made a lot of money" from AI.

But pressure has arrived as well: orders are growing at the same time as the cost structure is changing.

Unlike the asset-light logic of traditional software, AI infrastructure is clearly asset-heavy: data centers take a long time to build, capital intensity is high, and the procurement prices of core hardware such as GPUs remain high. A high-end computing card can cost tens of thousands of dollars, and large-scale training or inference deployments typically require thousands of them. The cost of an AI data center is no longer a matter of "hundreds of millions of dollars," but rather an investment that easily reaches tens of billions or even hundreds of billions of dollars.

The sharp rise in capital expenditures forces these infrastructure companies to seek new balance points in their financial structures. In the face of AI investments, labor becomes the most easily adjustable cost.

A simple and direct choice begins to emerge:

Trading labor costs for computing power costs.

AI dividends are being "redistributed"

To understand this change, we need to return to the value structure of the AI industry.

In the software industry of the past, value was often dispersed across multiple layers: the application layer, the platform layer, middleware, and the underlying infrastructure. Each layer could obtain a certain degree of pricing power through differentiated capabilities.

However, in the current AI cycle, this distribution is gradually concentrating. The value in the AI era can roughly be divided into two types, both centered on tokens: one is generative capability, which is the model's ability to produce tokens; the other is consumption capability, which is the volume of tokens that users continuously consume during the inference phase.

In simple terms: the AI dividend is concentrating on models and tokens.

Companies that master model capabilities, such as OpenAI, Google DeepMind, and Anthropic, can directly define product forms and pricing structures; platforms with large-scale user entry points can achieve continuous revenue through token consumption.

Traditional infrastructure remains important, but it increasingly resembles "electricity" and "bandwidth": essential, but with little power to set prices.

A pattern is becoming clearer: the closer one is to the generation and consumption of tokens, the higher the profit margin; the further away from this core, the more competition tends to focus on cost compression.

In other words, in the AI wave, those who control tokens control pricing power; those far from tokens can only focus on cost.

Most infrastructure companies control neither model capabilities nor user entry points. They play the role of a "support system," such as storing data, scheduling resources, providing operating environments, or building toolchains.

As technology moves from non-standard to standardized, and then from standardized to automated, the demand for labor naturally decreases.

While a technology is immature, a large number of engineers and operations personnel are necessary because systems are complex and lack standardization. But as model capabilities improve, automation tools become widespread, and platform capabilities mature, work that originally had to be done manually begins to be taken over by systems.

In this context, when companies need to reduce costs and improve efficiency, layoffs become an almost inevitable option. After all, labor is a continuous cost, while computing power is an upfront investment. Once the system is running stably, the size of the workforce will be reassessed.

In the early stages of the technology cycle, companies hire frantically; after the technology matures, they lay off large numbers of employees. This has almost become the fate of infrastructure companies.

This process is not unique to the AI era: in the early days of cloud computing, companies also experienced a transition from rapid expansion to efficiency optimization. However, the pace of AI development is noticeably faster.

The synchronous evolution of model capabilities, tool ecosystems, and hardware capabilities in a short period has directly compressed the process of efficiency improvement. Cloud computing took about ten years to achieve standardization and scaling, while AI may only need three years.

Another option is emerging

For those infrastructure companies heavily investing in AI, replacing some human labor with computing power may seem cold-blooded, but it is also a rational choice.

However, looking at the bigger picture, jobs have not disappeared entirely; they have migrated between different levels.

In the past few years, a large number of jobs have revolved around infrastructure, including system maintenance, data processing, process management, and tool development. With the addition of AI, some of these jobs have begun to be automated.

At the same time, the demand for positions directly involved in model development, application building, or product innovation is continuously increasing.

In this change, some practitioners face uncertainty, while other companies see opportunities and are ready to "pick up the pieces."

For example, WHOOP, a company focused on health and wearable devices, is expanding its team against the trend, planning to hire about 600 people.

WHOOP's CEO Ahmed stated, "This may be one of the best talent markets in history, with many excellent talents currently unemployed or working in companies that constantly talk about how they will be replaced by AI."

"Great teams will use great tools to create great products. We see a vast ocean of opportunities in health, fitness, balance, and medical functions. Rather than saying, 'Oh, how do we become so efficient in the next 12 months,' we are saying, 'How do we shorten a 3 to 5-year research roadmap to 12 to 24 months?' So, this makes us more ambitious, and I think that's the most exciting part right now."

This judgment reflects a mindset completely different from that of the infrastructure companies that are laying off employees.

For those companies focused on products and applications, AI is not just about saving money (although it can), but about improving efficiency: it allows the same team to accomplish what would originally take years in a shorter time, thus launching products faster and iterating continuously.

In this case, the role of humans has not been replaced; rather, it has been amplified by AI: the same people can do more, faster, and take on more complex things.

Therefore, you will see that AI brings about completely different results under different mindsets: for some companies, AI means reducing costs and improving efficiency; for others, it means accelerating innovation and expanding boundaries.

For practitioners, this change also has practical implications.

In the AI ecosystem, work can be roughly divided into three categories: directly creating content and capabilities (models, algorithms, agents); amplifying and applying capabilities (products, application layer); providing support and infrastructure (systems, tools, operations).

As AI capabilities improve, the third category of work is becoming increasingly substitutable. This does not mean that these positions lack value; rather, their value is harder to translate into premiums.

For practitioners, the key issue is no longer limited to the technology itself, but rather the industry position: what determines your stability is not your capability, but how close you are to the core value of AI.

The distance between positions and value creation will directly affect stability and development space.

As the technology cycle accelerates, organizational structures and job structures also change. Layoffs and hiring occur simultaneously, becoming two sides of the same era.

When facing the question "Will AI replace human labor?", we might ask instead: is this company using AI to save money, or is it using AI to make money?

AI will not directly determine whether you will be replaced, but it will determine whether your position is still worth retaining.

Risk Warning and Disclaimer

The market has risks, and investment requires caution. This article does not constitute personal investment advice and does not take into account the specific investment goals, financial situation, or needs of individual users. Users should consider whether any opinions, views, or conclusions in this article align with their specific circumstances. Any investment made on this basis is at your own risk.